AI Magic - Amazing World(s) of Generative Art

The amazing, sometimes terrifying, always intriguing world of generative art and algorithms.

PROMPT: “Computer code runs away from debugger chasing after it” (VQGAN+CLIP), 1024x512, ~800 iterations

What is this creative chaos, you ask? What if I told you an AI model generated this when I prompted it with the following text: “Computer code runs away from debugger chasing after it”?

A ridiculous text prompt, but ridiculous is irrelevant to an AI algorithm, and it’s up to the task! It rendered a composition of bug-like creatures and keyboard keys (representing the debugger?) consuming the bugs, along with what looks like small pools of computer code running like liquid on a surface. This image was rendered after 800 iterations of a GAN-based (generative adversarial network) AI model named VQGAN+CLIP, which I will explain how to use later in this post.

This post is intended as an introduction to AI and generative algorithms primarily for artists, interested laypeople and/or beginning AI & ML practitioners. All are welcome.

Welcome to the wonderfully horrifying world of generative art. The recent announcement of the DALL-E-2 generative model by OpenAI, and the subsequent jaw-dropping Twitter updates showing DALL-E-2’s art-generating magic from ‘a privileged few’ who had immediate access, gave me enough motivation to spend a portion of my time off from work discovering the dark secrets of this new generation of AI mages.

After some poking around, I found open source alternatives with nearly as much expressive and generative power as DALL-E-2. I read a few blogs, watched several videos, scanned a couple of GitHub code repositories and then started experimenting… and I’m (not) ashamed to say that I haven’t stopped since. It’s been at least a week.

AI Primer - Beginner

Quick primer on AI concepts & terms (beginners only, experts - don’t nitpick, just love me and skip to the next section). Don’t worry, you will not need any of this knowledge to generate images, the open source notebooks containing the generative AI models are ready to use and only require setting some parameters.

AI (and ML) are just fancy terms for the algorithms used to create these cool AI/ML models. Algorithms are written in software code (primarily in the Python programming language) and are generally based on deep mathematical principles and methods (think linear algebra and statistics).

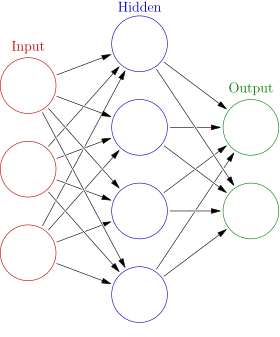

An AI model (again, just an algorithm) is made to suit a particular purpose by training it with a bunch of data (Wikipedia for text, or huge albums of images, etc.). Most modern AI models are composed of neural networks, a bunch of nodes connected in a network, as pictured below.

The neural networks, specifically the nodes and connections, are coded in a flexible manner (i.e. with parameters), allowing them to change their properties as they are trained and fine-tuned on the training datasets. There are also more general parameters, named hyperparameters, that allow you to configure global characteristics of your AI algorithm.

A notebook (a Jupyter notebook or a Google Colab notebook) is an interactive document that allows AI/ML engineers (and artists!) to write and run software code to create and train AI models and algorithms. Google Colab is a notebook subscription service with free, pro and pro plus tiers. One great thing about notebooks is the ability to share them with others.

AI Primer - Beginner++

Several additional concepts, more advanced.

Vector space / embedding - a mathematical representation of an image, word, sensor value or concept… as an array of values, usually numeric. When you provide training data (images, a text corpus or whatever), you need to feed the AI model a specifically formatted version of that data, and this is usually done by translating the input into a vector. Once you ‘vectorize’ a word or an image using a particular method (i.e. an embedding), all you need is a label for that input data that represents the topic/concept/entity you just vectorized, and you pretty much have everything necessary to supervise the training of most AI models / neural networks.

For example, a word can be represented by its position in a dictionary, hence a sentence can be represented by a vector of word positions…

or a small image, 16 pixels wide by 16 pixels high, can be represented by a 256 value vector (16 multiplied by 16) where each value is the pixel intensity of that part of the image.
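The two examples above can be sketched in a few lines of Python. This is only a toy illustration: the six-word `vocabulary` and the synthetic 16x16 `image` are made up for the example, and real embeddings are learned by a model rather than looked up like this.

```python
def sentence_to_vector(sentence, vocabulary):
    """Replace each word with its position in an alphabetically sorted vocabulary."""
    index = {word: position for position, word in enumerate(sorted(vocabulary))}
    return [index[word] for word in sentence.lower().split()]


def image_to_vector(image):
    """Flatten a grid of pixel intensities (a list of rows) into one long list."""
    return [pixel for row in image for pixel in row]


# Hypothetical tiny "dictionary"; sorted order is a, bug, chases, code, debugger, the.
vocabulary = ["the", "debugger", "chases", "a", "bug", "code"]
sentence_vec = sentence_to_vector("The debugger chases the bug", vocabulary)

# Synthetic 16x16 grayscale image whose pixel intensities simply count up from 0 to 255.
image = [[row * 16 + col for col in range(16)] for row in range(16)]
image_vec = image_to_vector(image)

print(sentence_vec)    # [5, 4, 2, 5, 1]
print(len(image_vec))  # 256
```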

Distance and Similarity - once you encode your input data as vectors, you can imagine those vectors being mapped onto a multidimensional grid (i.e. a vector space). Once the vectors are living in the same vector space, you can perform interesting comparisons between them. The distance between two vectors, for example, can be used as a proxy for ‘how similar’ two concepts (or words, or sentences, or images, etc.) are to each other, a semantic similarity. When you hear terms like cosine similarity or Euclidean distance, these are just different ways to determine how close or far (i.e. how similar) two vectorized (or encoded) concepts are to each other. (Levenshtein distance is a cousin of these that works directly on text, counting the character edits needed to turn one string into another.)
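To make ‘how similar’ concrete, here is a minimal sketch of cosine similarity and plain Euclidean distance. The three-value vectors for `cat`, `kitten` and `car` are invented for illustration and do not come from any real model.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means they point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def euclidean_distance(u, v):
    """Straight-line distance between u and v: 0.0 means they are identical."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Made-up embeddings: 'cat' and 'kitten' are deliberately placed close together.
cat = [1.0, 0.9, 0.1]
kitten = [0.9, 1.0, 0.2]
car = [0.1, 0.2, 1.0]

print(cosine_similarity(cat, kitten) > cosine_similarity(cat, car))    # True
print(euclidean_distance(cat, kitten) < euclidean_distance(cat, car))  # True
```

Note the opposite directions: a higher cosine similarity means ‘more alike’, while a lower distance means ‘more alike’.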

GAN, the generative adversarial network, was invented by a young fellow named Ian Goodfellow (see what I did there). The backstory is based on legend and folklore, and I’m going to totally butcher it, but it’s a cool story that helps explain the concept behind the GAN, so here it goes… it revolves around our buddy Ian drinking a few too many at a local bar (Les 3 Brasseurs, Montreal) with friends.

GAN, the backstory - Ian contemplated the implications of having two AI models trying to outcompete one another. What if AI model #1 generates fake images, while AI model #2 is fed both fake and real images and tries to tell whether each one is real or fake? He likened it to an artist and an art critic trying to outduel each other: the artist shows generated images that he’s trying to pass off as real, while the art critic is fed both real images and the artist’s fakes and tries to determine which are real and which are fake (generated).

During training, both AI models (the artist and the art critic) improve their skills. After enough iterations, the artist (or generator) gets better at creating fakes that fool the critic and, at the same time, the art critic gets better at distinguishing fakes from real images. A competitive feedback loop is formed and, several beers later, you have the artist (i.e. AI model #1 - the generator) creating super realistic images and the art critic (i.e. AI model #2 - the critic, usually called the discriminator) fine-tuning its ability to distinguish the fakes from the real ones.

PROMPT: “Young AI researcher with glasses sitting on a bar stool drinking beers at a bar, thinking deeply of generative adversarial model architecture resembling an artist and an art critic”

His friends told him that it wouldn’t work. He went home, slightly inebriated, and coded a prototype in one pass on his laptop that night. It worked the first time… as rumor has it, and the legend was forged. Arguably the biggest AI breakthrough since the invention of the Convolutional Neural Network, but that’s another story (and battle).
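The artist-versus-critic loop can be sketched without any AI library. This is a deliberately tiny toy, not Ian’s prototype and not how VQGAN is trained: the ‘images’ are single numbers, the artist has one parameter `b`, the critic has two (`w` and `c`), and every name and setting here is an assumption made for illustration.

```python
import math
import random


def sigmoid(t):
    """Squash any number into a probability between 0 and 1 (overflow-safe)."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, seed=0):
    rng = random.Random(seed)
    b = 0.0          # artist (generator): a fake sample is b plus some noise
    w, c = 0.0, 0.0  # critic: P(sample is real) = sigmoid(w * sample + c)
    for _ in range(steps):
        real = rng.gauss(real_mean, 0.5)  # one real sample
        fake = b + rng.gauss(0.0, 0.5)    # one sample from the artist

        # Critic step: raise log P(real is real) + log P(fake is fake).
        p_real = sigmoid(w * real + c)
        p_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - p_real) * real - p_fake * fake)
        c += lr * ((1.0 - p_real) - p_fake)

        # Artist step: nudge b so the updated critic rates the fake as more real.
        p_fake = sigmoid(w * fake + c)
        b += lr * (1.0 - p_fake) * w
    return b, w, c


b, w, c = train_toy_gan()
print(f"artist offset b = {b:.2f} (real data is centred on 4.0)")
```

The artist never sees the real data directly. It only learns from the critic’s verdicts, which is the whole trick. Toy setups like this one can wobble around the target instead of settling on it, so treat the printed number as a rough indication.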

CLIP - paper - blog - is an AI model authored by OpenAI and trained by associating images with text. This model benefitted from earlier research that was able to show predictive power when embedding images, words and, subsequently, image + word pairs in the same vector space. This embedding approach (image + word pairs) provides the bridge between Natural Language Processing (NLP) and images.

VQGAN - paper - github blog - is an AI model authored by Patrick Esser, Robin Rombach and Björn Ommer. The VQGAN learns a codebook of context-rich visual parts, combining the effectiveness of Convolutional Neural Networks (CNNs) with the power of the Transformer model architecture, which enables it to generate high-resolution images.
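The ‘VQ’ in VQGAN stands for vector quantized, and the codebook idea can be sketched as a nearest-neighbour lookup. This is a toy under stated assumptions: the four-entry, two-value `codebook` and the `patches` are invented here, whereas a real VQGAN learns a far larger codebook of much longer vectors during training.

```python
def nearest_code(vector, codebook):
    """Return the index of the codebook entry closest to the given vector."""
    def squared_distance(u, v):
        return sum((a - b) ** 2 for a, b in zip(u, v))
    return min(range(len(codebook)), key=lambda i: squared_distance(vector, codebook[i]))


def quantize(patches, codebook):
    """Describe each patch by the index of its nearest codebook entry."""
    return [nearest_code(patch, codebook) for patch in patches]


# Hypothetical codebook of four 'visual parts', each just two numbers here.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Hypothetical features for four image patches.
patches = [[0.1, 0.2], [0.9, 0.1], [0.2, 0.8], [0.95, 0.9]]

codes = quantize(patches, codebook)
reconstruction = [codebook[i] for i in codes]

print(codes)  # [0, 1, 2, 3]
```

An image becomes a short list of codebook indices, a bit like words in a sentence, which is the kind of input a Transformer is good at working with.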

VQGAN+CLIP - Let’s Begin Generating

VQGAN BLOG - CLIP BLOG - VQGAN+CLIP COLAB NOTEBOOK

Now back to business. After researching (well… googling), I discovered the open source availability of a powerful generative AI model named VQGAN+CLIP. It’s actually two distinct models married together, materializing a powerful image generation capability from nothing more than a text prompt.

PROMPT: “During a snowstorm, etched into a mountainside, a large wooden door has Elvish letters etched in a magical emerald green glow | a map is also glowing etched into the door | rendered in Unreal Engine, v-ray”

To begin playing I would suggest the following Google Colab notebook titled VQGAN+CLIP(Updated). This notebook fixes some issues in older notebooks with outdated links. You can experiment with the free tier on Google Colab, but be forewarned, it will take a while to get a good rendering. I would suggest you start with small images, like 128x128 or 256x256 just to get your feet wet. Once you get the hang of it, and you feel like spending a little money, I would suggest you sign up for the Google Colab Pro tier (currently $10/month).

PROMPT: “View from above, inside an art studio an abstract artist works on five half-finished art canvases American Abstract”

The following is a quick walkthrough, assuming you have little or no experience with Google Colab, and notebooks in general.

VQGAN+CLIP GOOGLE COLAB NOTEBOOK WALKTHRU

VQGAN+CLIP COLAB NOTEBOOK

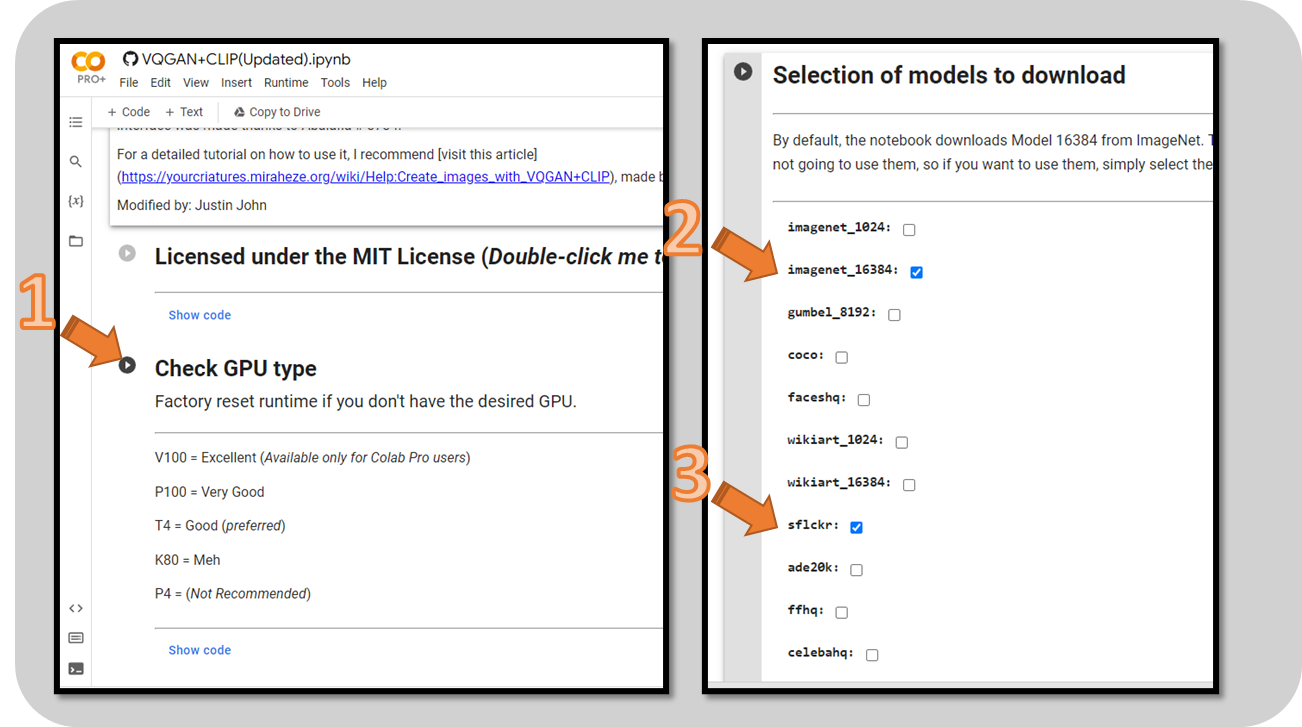

With notebooks, you need to run each block of code in sequence, by pressing the play buttons in the left hand side (see pic below). Click on the link to load up the notebook into your browser. Now follow the walkthrough to start generating images…

#1 - INITIALIZE RUNTIME - Press the play button near the “Check GPU type” section. This will start a Google Colab runtime automatically for you, run the ‘Check GPU type’ code block and print out which GPU (or CPU/TPU) has been assigned to you. NOTE: Every press of a play button arrow will show a little animation around the play button while it’s executing - wait until it stops moving and you see the little green checkmark, indicating the code block has finished executing.

#2 - CHOOSE MODELS TO LOAD - Move to the next block. I would suggest you choose 2 models (during early experimentation), starting with the imagenet_16384 model, which should be automatically checked…

#3 - Also make sure you check off the sflckr model, this provides excellent results. Now press the play button near the “Selection of models…” title.

This will take a while as the models are initially downloaded into your Google Colab runtime. It should take about 5-10 minutes.

#4 - LOAD LIBRARIES - Click the “Loading libraries and definitions” play button and wait until it finishes executing before you go on to the next block.

#5 - SET PARAMETERS, IMAGE - Set your image width and height.

#6 - SET PARAMETERS, MODEL - Leave the ‘model’ as imagenet (default) or switch to sflckr (my favorite). If you have the free tier, then you may want to lower the ‘images_interval’ from 50 to about 10 or 25. Once you’re done with the configurations, click the play button near the word ‘Parameters’. This will complete execution immediately.

#7 - DO MAGIC !! - Now press the “Fire up the AI” play button and wait for the MAGIC. You’ll begin seeing images get rendered below the code block. Images are generated on every iteration, but they will only be displayed at the rate set by the “images_interval” you selected.
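To get a feel for what ‘images_interval’ does, here is a small sketch of the settings from steps #5 and #6. It is not code from the notebook: only `model` and `images_interval` are named in the walkthrough, while `width`, `height` and `max_iterations` are assumed names, and the rule ‘show an image whenever the iteration count is a multiple of the interval’ is also an assumption.

```python
# Hypothetical settings mirroring steps #5 and #6; only 'model' and
# 'images_interval' are names taken from the walkthrough above.
parameters = {
    "width": 256,           # assumed name: image width in pixels
    "height": 256,          # assumed name: image height in pixels
    "model": "sflckr",
    "images_interval": 25,
    "max_iterations": 800,  # assumed name: how long to keep rendering
}


def displayed_iterations(max_iterations, images_interval):
    """Iterations at which a preview would be shown under the assumed rule."""
    return list(range(0, max_iterations + 1, images_interval))


previews = displayed_iterations(parameters["max_iterations"], parameters["images_interval"])

print(len(previews))  # 33 previews over 800 iterations
print(previews[:4])   # [0, 25, 50, 75]
```

A smaller interval does not make rendering faster. It just lets you see progress sooner, which helps you decide early whether a prompt is worth the wait.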

Resources, Links & Tips

PROMPT: “An old elvish magician with mystical green robes teaches a classroom full of rowdy orc children students in a wood cabin schoolhouse early morning eerie fog, Greg Rutkowski James Gurney, Nicolas Pierquin artstation”

PROMPT: “Outdoor dance club covered in graffiti, smoky, break dancers, boombox, DJ, record players, microphone 80s hip hop”

Image Quality - All of the prompt-based images shown in this post are low resolution (either 768x512 or 1024x512) and have not been upscaled to higher resolutions. Upscaling your generated image will provide higher quality images, see upscaler resources below.

Text Prompt Generation - Experiment with the way you construct your text prompts, you’ll be surprised how well the AI model can make a suitable image, no matter how complicated your text prompt is… be descriptive. See text prompt resources below.

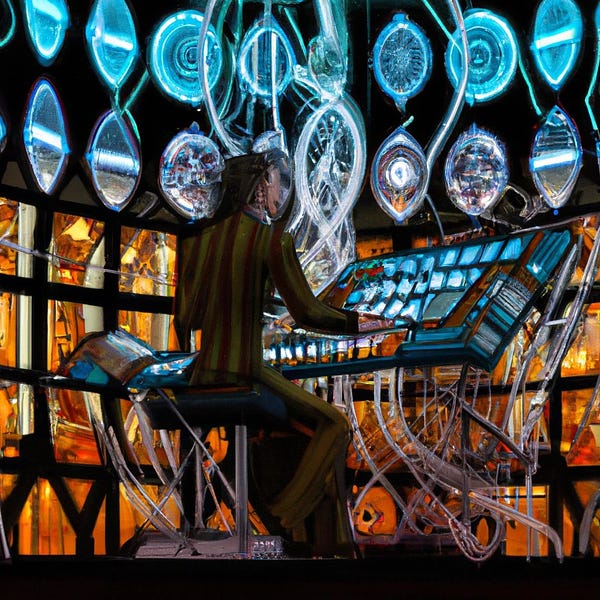

PROMPT: “Futuristic mozart composing a new digital symphony with a huge synthesizer made of stained glass and electricity”

Here’s a list of useful resources, links and tips, once you really get going and want to take your creations to the next level.

El Bestiario del Hypogripho Dorado - Text Prompt Guide - Spanish version - English Version - Excellent resource on authoring your text prompts for maximum effectiveness.

Katherine Crowson - RiversHaveWings Twitter - Github (crowsonkb) - AI/generative Artist - EleutherAI

Ryan Moulton Blog - Sacred Library - Doorways - Wonderful journey into art generation, narrative and story telling.

Artstation - Digital art platform - use this to find favorite artists whose names you can use in your text prompts.

REDDIT DeepDream channel - excellent tips, renderings and info

Image Upscalers - Google AI Blog - Real-ESRGAN Github - AI image upscalers to increase the resolution of your generated images.

GANFolk - Rob A. Gonsalves Medium - Generative Art, Image Manipulation, artist, inventor, and engineer who researches and writes about the creative uses of AI.